¹Fudan University, ²CMU, ³MIT, ⁴Li Auto, ⁵Tsinghua University

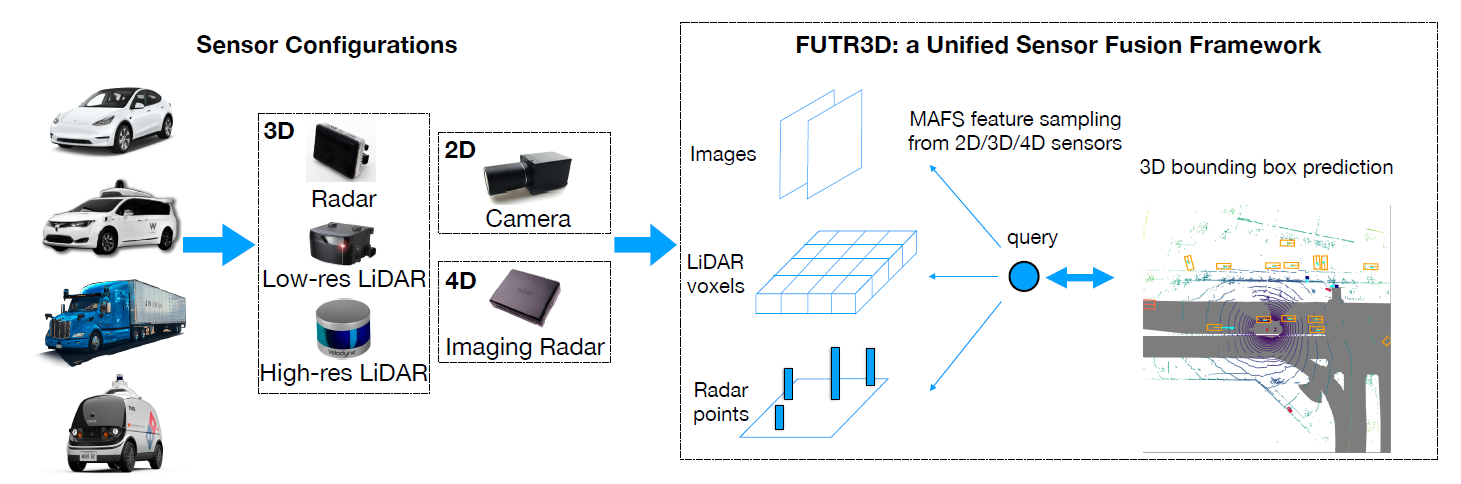

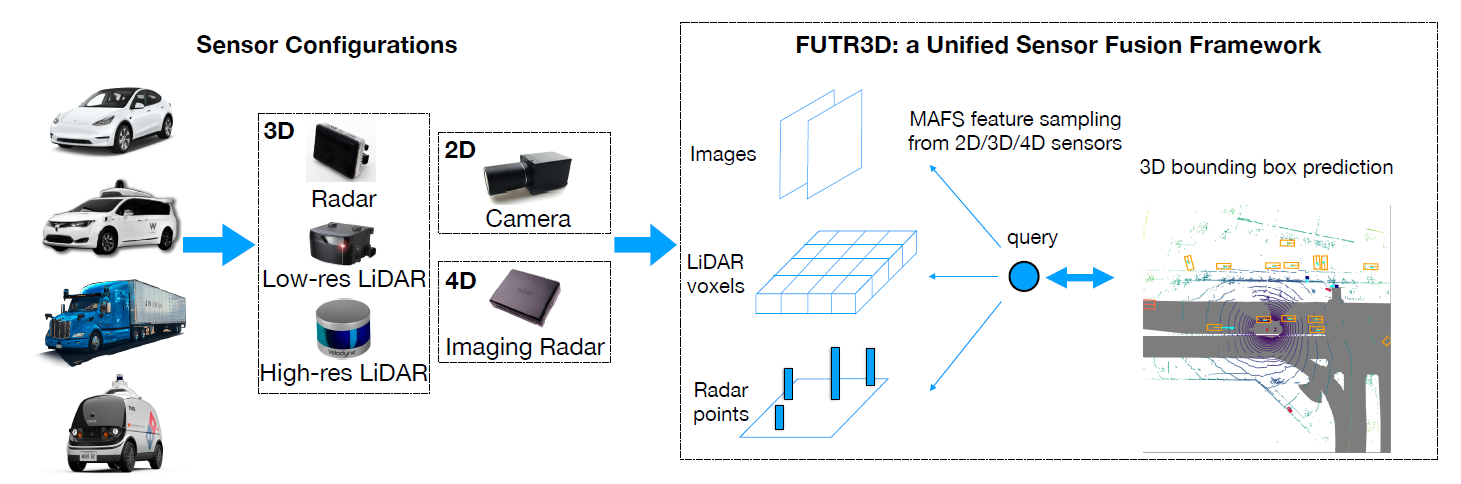

A unified sensor fusion framework that works with arbitrary sensor combinations and performs competitively with various customized state-of-the-art models. FUTR3D supports camera-LiDAR fusion, camera-radar fusion, and camera-LiDAR-radar fusion.

Abstract

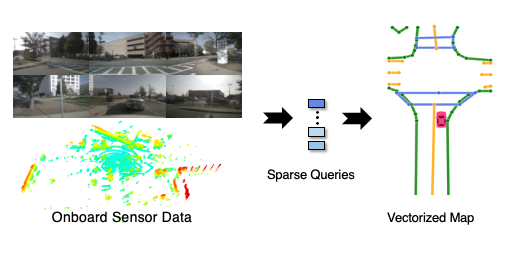

Sensor fusion is an essential topic in many perception systems, such as autonomous driving and robotics. Existing multi-modal 3D detection models usually involve customized designs that depend on the sensor combination or setup. In this work, we propose the first unified end-to-end sensor fusion framework for 3D detection, named FUTR3D, which can be used in (almost) any sensor configuration. FUTR3D employs a query-based Modality-Agnostic Feature Sampler (MAFS), together with a transformer decoder trained with a set-to-set loss for 3D detection, thus avoiding late-fusion heuristics and post-processing tricks. We validate the effectiveness of our framework on various combinations of cameras, low-resolution LiDARs, high-resolution LiDARs, and radars. On the nuScenes dataset, FUTR3D outperforms specifically designed methods across different sensor combinations. Moreover, FUTR3D offers great flexibility across sensor configurations and enables low-cost autonomous driving. For example, using only a 4-beam LiDAR with cameras, FUTR3D (56.8 mAP) performs on par with the state-of-the-art 3D detection model CenterPoint (56.6 mAP), which uses a 32-beam LiDAR.
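In DETR-style detectors, a set-to-set loss is computed after a one-to-one matching between predicted objects and ground-truth objects; that matching is what lets the detector skip NMS-style post-processing. The sketch below assumes that formulation and is only an illustration, not FUTR3D's exact loss: the names `pair_cost`, `match`, `set_loss`, and `NO_OBJECT`, the cost terms, and the use of box centers instead of full 3D boxes are all hypothetical simplifications. It finds the assignment by brute force over permutations, which only works for tiny sets; practical implementations use the Hungarian algorithm (for example SciPy's `linear_sum_assignment`).

```python
import math
from itertools import permutations

NO_OBJECT = "no_object"  # hypothetical background label for unmatched queries


def l1(a, b):
    """L1 distance between two 3D centers."""
    return sum(abs(x - y) for x, y in zip(a, b))


def pair_cost(pred, gt, w_box=1.0):
    """Matching cost: negative class probability plus weighted L1 center distance."""
    probs, center = pred
    label, gt_center = gt
    return -probs.get(label, 0.0) + w_box * l1(center, gt_center)


def match(preds, gts):
    """Exhaustive optimal one-to-one assignment (assumes len(preds) >= len(gts)).

    Returns a dict mapping prediction index -> ground-truth index.
    """
    best, best_cost = (), math.inf
    for perm in permutations(range(len(preds)), len(gts)):
        cost = sum(pair_cost(preds[p], gts[g]) for g, p in enumerate(perm))
        if cost < best_cost:
            best, best_cost = perm, cost
    return {p: g for g, p in enumerate(best)}


def set_loss(preds, gts, w_box=1.0, eps=1e-9):
    """Classification loss on every query plus L1 box loss on matched queries."""
    assignment = match(preds, gts)
    loss = 0.0
    for i, (probs, center) in enumerate(preds):
        if i in assignment:
            label, gt_center = gts[assignment[i]]
            loss += -math.log(probs.get(label, 0.0) + eps)
            loss += w_box * l1(center, gt_center)
        else:
            # Unmatched queries are trained toward "no object" instead of being suppressed.
            loss += -math.log(probs.get(NO_OBJECT, 0.0) + eps)
    return loss / max(len(gts), 1)


# Three queries: query 1 is a low-confidence duplicate of the car found by query 0.
preds = [
    ({"car": 0.9, NO_OBJECT: 0.1}, (10.0, 2.0, 0.5)),
    ({"car": 0.2, NO_OBJECT: 0.8}, (10.3, 2.1, 0.5)),
    ({"pedestrian": 0.7, NO_OBJECT: 0.3}, (4.0, -1.0, 0.9)),
]
gts = [("car", (10.1, 2.0, 0.5)), ("pedestrian", (4.1, -1.0, 0.9))]
print(match(preds, gts))  # {0: 0, 2: 1}
```

Because the duplicate query is left unmatched and pushed toward `NO_OBJECT`, training encourages exactly one prediction per object, which is why a set-prediction decoder does not need a duplicate-removal step at inference time.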

Method

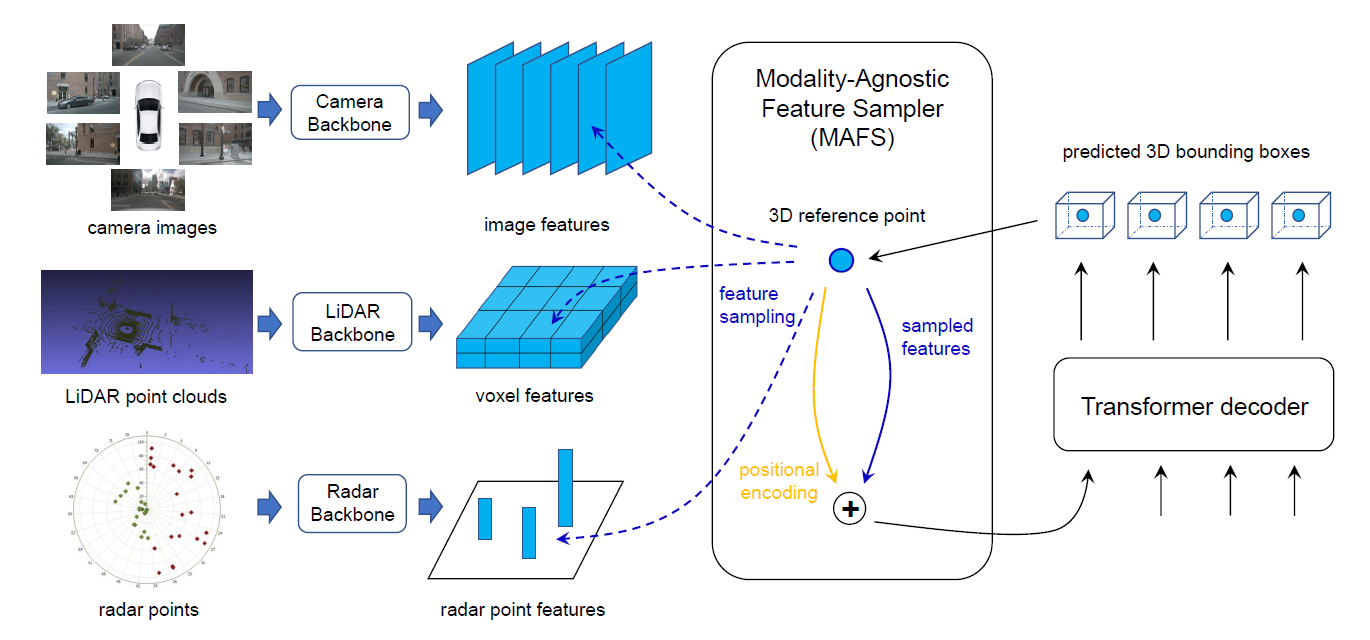

Unified sensor fusion framework. FUTR3D first encodes features for each modality individually, and then employs a query-based Modality-Agnostic Feature Sampler (MAFS) that works in a unified domain and extracts features from different modalities. Finally, a transformer decoder operates on a set of 3D queries and performs set predictions of objects. The contributions of our work are the following:

- Unified Framework: Our Modality-Agnostic Feature Sampler, called MAFS, enables our method to operate on any sensors and their combinations in a modality-agnostic way. Using 3D queries, MAFS samples and aggregates features from cameras, high-resolution LiDARs, low-resolution LiDARs, and radars.

- Effectiveness: FUTR3D performs competitively with specifically designed fusion methods across different sensor combinations.

- Low-cost System: FUTR3D offers excellent flexibility across sensor configurations and enables low-cost perception systems for autonomous driving. On the nuScenes dataset, FUTR3D achieves 56.8 mAP with a 4-beam LiDAR and camera images, which is on par with the state-of-the-art model CenterPoint (56.6 mAP) using a 32-beam LiDAR.
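The pipeline described above (per-modality encoders, then MAFS, then a query-based decoder) can be illustrated with a minimal NumPy sketch of one MAFS step for a single query. Everything in it is an assumption for illustration: the helper names `bilinear_sample`, `sample_camera`, `sample_bev`, and `mafs`, the single-point bilinear sampling, the random matrix `W_fuse` standing in for a learned fusion MLP, the toy camera projection, and the BEV grid parameters. The reference point is given directly rather than predicted from the query, and FUTR3D's actual sampler may differ (for example in how points are sampled or how many feature levels are used). What the sketch shows is the modality-agnostic idea: every sensor is queried at the same 3D reference point, so adding or dropping a modality only changes the list of samplers.

```python
import numpy as np


def bilinear_sample(feat, u, v):
    """Bilinearly sample a (C, H, W) map at continuous coords (u = column, v = row).

    Returns (feature, valid); out-of-bounds points yield zeros and valid=False.
    """
    C, H, W = feat.shape
    if not (0 <= u <= W - 1 and 0 <= v <= H - 1):
        return np.zeros(C), False
    u0, v0 = int(np.floor(u)), int(np.floor(v))
    u1, v1 = min(u0 + 1, W - 1), min(v0 + 1, H - 1)
    du, dv = u - u0, v - v0
    top = (1 - du) * feat[:, v0, u0] + du * feat[:, v0, u1]
    bottom = (1 - du) * feat[:, v1, u0] + du * feat[:, v1, u1]
    return (1 - dv) * top + dv * bottom, True


def sample_camera(feats, proj_mats, point):
    """Project a 3D point into each camera and average the valid samples."""
    p_h = np.append(point, 1.0)  # homogeneous ego-frame coordinates
    samples = []
    for feat, P in zip(feats, proj_mats):  # P: 3x4 ego-to-feature-map projection
        x, y, z = P @ p_h
        if z <= 1e-5:  # point is behind this camera
            continue
        f, ok = bilinear_sample(feat, x / z, y / z)
        if ok:
            samples.append(f)
    return np.mean(samples, axis=0) if samples else np.zeros(feats[0].shape[0])


def sample_bev(bev, point, pc_range, voxel):
    """Sample a (C, H, W) bird's-eye-view map (LiDAR or radar) at the point's x-y."""
    u = (point[0] - pc_range[0]) / voxel
    v = (point[1] - pc_range[1]) / voxel
    return bilinear_sample(bev, u, v)[0]


def mafs(query, ref_point, samplers, W_fuse):
    """Gather features from every available modality at one shared 3D point,
    concatenate them, and add a projected fusion back to the query."""
    fused = np.concatenate([sample(ref_point) for sample in samplers])
    return query + np.tanh(W_fuse @ fused)  # tanh(W x) stands in for a learned MLP


# Toy camera-LiDAR setup with random "encoder" outputs.
rng = np.random.default_rng(0)
C, D = 8, 16
cam_feats = [rng.standard_normal((C, 32, 88))]
K = np.array([[50.0, 0.0, 44.0], [0.0, 50.0, 16.0], [0.0, 0.0, 1.0]])
# Ego x (forward) -> camera z, ego y (left) -> -camera x, ego z (up) -> -camera y.
Rt = np.array([[0.0, -1.0, 0.0, 0.0], [0.0, 0.0, -1.0, 0.0], [1.0, 0.0, 0.0, 0.0]])
proj_mats = [K @ Rt]
lidar_bev = rng.standard_normal((C, 128, 128))
pc_range, voxel = (-51.2, -51.2), 0.8

samplers = [
    lambda p: sample_camera(cam_feats, proj_mats, p),
    lambda p: sample_bev(lidar_bev, p, pc_range, voxel),
]
W_fuse = 0.1 * rng.standard_normal((D, C * len(samplers)))
query = np.zeros(D)
ref_point = np.array([10.0, 2.0, 0.5])
new_query = mafs(query, ref_point, samplers, W_fuse)
print(new_query.shape)  # (16,)
```

Switching this toy setup to camera-radar fusion would only change the entries of `samplers` (for example a radar BEV map in place of `lidar_bev`) and the input width of `W_fuse`; the query update itself stays the same, which is the property the Unified Framework bullet describes.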

If you find our work useful in your research, please cite our paper:

@article{chen2022futr3d,
  title={FUTR3D: A Unified Sensor Fusion Framework for 3D Detection},
  author={Chen, Xuanyao and Zhang, Tianyuan and Wang, Yue and Wang, Yilun and Zhao, Hang},
  journal={arXiv preprint arXiv:2203.10642},
  year={2022}
}